Evaluation (2019-03-21)

Tagged as: evaluation

Group: A

Evaluation of the Regensburger Usability Platform

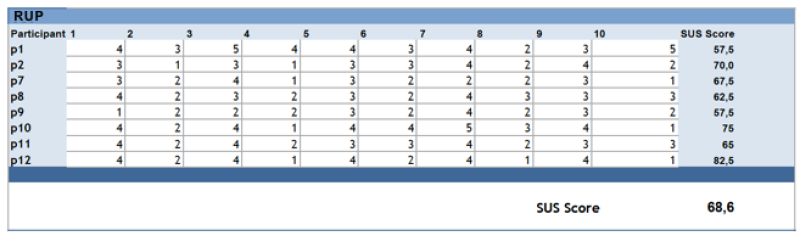

RUP:

- SUS score: 68.6 → offers acceptable usability (according to Bangor, Kortum, and Miller (2009))

- Feedback from our participants:

  - Positive:

    - Clear structure

    - Reduced set of functions

    - Easy to learn

    - Feels reliable, unambiguous, and conclusive

  - Major usability issues:

    - OBS setup has to be supported by clear instructions

    - The browser's back button should be supported

    - Chat: Notifications for incoming chat messages are missing

    - Chat: The chat history should scroll automatically to the latest message

    - Annotations: The annotation workflow should be changed: add the timestamp first and the entry afterwards

    - Annotations: Annotations should be connected to the current task

    - Creating a test: The test should be saved before individual tasks are added

    - Creating a test: Tests and tasks should be editable

    - Creating a test: It should be possible to start a test right after creating it

    - Most importantly: Missing feedback

      - Feedback is missing for almost all implemented functions (saving or deleting a test or task, adding an annotation, a subject completing a task…)

MORAE:

- SUS score: 64.1 → according to Bangor et al. (2009), Morae's usability lies between "OK" and "good"

- Feedback from our participants, major issues (not shown in detail, as the main purpose of the paper is the evaluation of the RUP):

  - Outdated look and feel of the tool

  - Unstructured, low predictability

  - Many functions that are not structured conclusively (e.g. nested tabs, hierarchy and placement of elements, highlighting of elements)

  - The test supervisor has to use at least two screens to avoid getting confused during the test

  - No notifications for incoming chat messages
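
The SUS scores reported above for RUP and Morae are presumably means over the individual participants' scores. As a minimal sketch of the standard SUS scoring rule (Brooke 1995), assuming ten items answered on a 1 to 5 scale: odd-numbered items contribute their answer minus 1, even-numbered items contribute 5 minus their answer, and the sum is multiplied by 2.5. The function names and the sample answers are hypothetical and are not data from our study.

```python
from statistics import mean


def sus_score(responses):
    """Return the SUS score (0-100) for one participant.

    `responses` holds the ten questionnaire answers in item order,
    each an integer from 1 (strongly disagree) to 5 (strongly agree).
    """
    if len(responses) != 10:
        raise ValueError("SUS needs exactly 10 item responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")

    total = 0
    for item_number, answer in enumerate(responses, start=1):
        if item_number % 2 == 1:
            # Odd items are positively worded: contribution is answer - 1.
            total += answer - 1
        else:
            # Even items are negatively worded: contribution is 5 - answer.
            total += 5 - answer
    # The summed contributions (0-40) are scaled to a 0-100 range.
    return total * 2.5


def mean_sus(all_responses):
    """Average the individual SUS scores of several participants."""
    return mean(sus_score(r) for r in all_responses)


if __name__ == "__main__":
    # Hypothetical answers from two participants (not study data).
    sample = [
        [4, 2, 4, 2, 4, 2, 4, 2, 4, 2],
        [3, 3, 3, 3, 3, 3, 3, 3, 3, 3],
    ]
    print([sus_score(r) for r in sample])  # [75.0, 50.0]
    print(mean_sus(sample))                # 62.5
```

A tool-level score such as those listed for RUP and Morae would then be the result of `mean_sus` over all participants who rated that tool.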

Aaron Bangor, Phil Kortum, and James Miller. 2009. Determining What Individual SUS Scores Mean: Adding an Adjective Rating Scale. J. Usability Stud. 4 (04 2009), 114–123.

John Brooke. 1995. SUS: A quick and dirty usability scale. Usability Eval. Ind. 189 (11 1995).